Wireguard

Posted: 2019-06-26 08:38:57 by Alasdair Keyes

Last year I made a post on Wireguard and wrote a Nagios plugin to allow monitoring of connected peers. I mentioned that I would likely do a post about my thoughts on Wireguard later, and here it is...

Before I begin, this isn't a copy-and-paste piece about how it has a slim code base, nor a list of its encryption algos. That is all important, but it is covered in depth in every article about Wireguard on the internet. This is written from more of a user/admin point of view.

I will also refer to the Server/Client paradigm, although Wireguard seems to operate only on the idea of Peers. Essentially, a "Server" would be a machine with lots of peers connecting to it and routing traffic through it, and a "Client" would be a Peer that connects to a single peer (or a limited number of peers) and routes some or all of its traffic across the interface.

It should be noted that this is tested using Debian Linux. Wireguard is available for lesser operating systems.

The Good

- Clear and limited encryption

Moving on from the fact that I won't just list off the protocols it uses internally, Wireguard's deliberately limited set of encryption algorithms, ciphers etc. means that, as a sysadmin, I know that I cannot actively downgrade or harm my VPN's security.

With tools like OpenVPN, having a range of ciphers and ability to choose different key lengths is good, but at some point I will forget to update these and eventually be running it with a key size that's too small or a cipher that has a known flaw. Large companies may security review their setups regularly but small companies or personal users will most likely not.

This does lead to the potential problem of a single vulnerability affecting all Wireguard installs, as they share a similar configuration. However, this can occur with any software, and I don't have to worry that my lack of knowledge or ability is actively making the tunnel less secure than it should be.

Other nice extras: Wireguard operates on asymmetric cryptography with public/private keys, but also gives the option of a pre-shared key per client for extra security (especially for, say, a post-quantum world). It also offers Perfect Forward Secrecy (PFS), so even if private keys are leaked, previous session data is still secure.

- Ease of end user configuration.

The client (or 'peer' in Wireguard parlance) configuration file is very lightweight, often fewer than 10 lines of config. The private/public keys and the optional pre-shared key are all included in-line in the file and are very small. There's no more need to hand out CA certs, private keys etc. on top of config files to users.
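To give a sense of how small such a file is, here is a minimal Python sketch that renders a wg-quick style client config. The function name, the addresses, the endpoint and the key strings are all placeholders made up for illustration; only the section and setting names come from Wireguard's config format.

```python
def render_client_config(private_key, address, server_public_key, endpoint,
                         allowed_ips="0.0.0.0/0", preshared_key=None):
    """Return the text of a wg-quick style client config.

    Every value passed in is a placeholder for illustration only.
    """
    lines = [
        "[Interface]",
        f"PrivateKey = {private_key}",
        f"Address = {address}",
        "",
        "[Peer]",
        f"PublicKey = {server_public_key}",
    ]
    # The pre-shared key is optional, so only emit it when one is supplied.
    if preshared_key is not None:
        lines.append(f"PresharedKey = {preshared_key}")
    lines += [
        f"Endpoint = {endpoint}",
        f"AllowedIPs = {allowed_ips}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_client_config(
        private_key="<client-private-key>",
        address="10.0.0.2/32",
        server_public_key="<server-public-key>",
        endpoint="vpn.example.com:51820",
        preshared_key="<optional-preshared-key>",
    ))
```

An AllowedIPs value of 0.0.0.0/0 is used here as the default to route all IPv4 traffic through the tunnel; a narrower range would route only some of it.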

The config file can also contain PreUp, PostUp etc. type commands to enable firewall changes or other relevant tasks that should be performed so you don't have to find ingenious ways of hooking it in with other things on your system.

Newer versions of Gnome Network Manager have support for Wireguard, making configuration even easier for the non-tech-savvy.

- Tooling

This part is quite impressive and well thought out. Wireguard config is stored in a single file and can either be edited directly in the file if the interface is down, or changed in real time using the wg tool when the interface is up (you have the option of whether these changes are persisted or kept only until the interface is brought down).

The tools and man pages are detailed and easy to follow, and the limited number of options means that there's not too much to get wrong with configuration.

There is also a wg-quick tool which will bring up interfaces and configure default routing for you too.

Having the functionality for editing config via CLI is great for automation. I built a puppet module this weekend to configure a Wireguard server and the wg and wg-quick tools were invaluable.
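As a rough illustration of why the CLI lends itself to automation, the sketch below builds a `wg set` command for adding or removing a peer on a running interface. The helper names, the interface name wg0 and the key placeholder are assumptions for the example, and this is not how the puppet module itself is written. By default it only prints the command rather than running it.

```python
import subprocess


def wg_set_peer_command(interface, public_key, allowed_ips, remove=False):
    """Build the argv list for adding, updating or removing a peer with `wg set`."""
    command = ["wg", "set", interface, "peer", public_key]
    if remove:
        command.append("remove")
    else:
        # wg expects the allowed IPs as one comma-separated list of CIDR ranges.
        command += ["allowed-ips", ",".join(allowed_ips)]
    return command


def apply(command, dry_run=True):
    """Print the command, and only execute it when dry_run is False."""
    print(" ".join(command))
    if not dry_run:
        # Running this for real needs root privileges and the wg tool installed.
        subprocess.run(command, check=True)


if __name__ == "__main__":
    apply(wg_set_peer_command("wg0", "<peer-public-key>", ["10.0.0.2/32"]))
```

Changes made this way apply to the live interface; `wg-quick save wg0` can then write the running state back to the config file if you want it kept.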

The Bad

- Security reviews

As far as I know Wireguard hasn't been security reviewed. This is not surprising: it's still in development, and it takes a lot of time and effort for software to be reviewed, but it will be interesting to see the results when it finally does happen.

- No definite connection numbers

Due to the connection-less way Wireguard works, there is no defined list of peers that are connected/unconnected. The server knows how long it has been since a handshake occurred and a new PFS session was started with each peer, but not whether a peer is actually connected. This is also in part due to the use of UDP (tunnelling TCP over TCP has some problems, so UDP is best here). Connection information can be extrapolated (as the Nagios plugin does), but it would be nice to know how many connections there are. Connection numbers can be a good way of knowing early on if there are any problems.
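A rough sketch of that kind of extrapolation, not necessarily how the Nagios plugin does it: `wg show <interface> latest-handshakes` prints one line per peer with its public key and the Unix timestamp of the last handshake, so peers can be counted as "probably connected" if that handshake is recent. The function name and the 180 second cut-off are assumptions chosen for the example.

```python
import time


def count_recent_peers(latest_handshakes_output, max_age_seconds=180, now=None):
    """Count peers whose last handshake is recent enough to call them connected.

    Expects the text printed by `wg show <interface> latest-handshakes`:
    one "<public-key> <unix-timestamp>" pair per line.
    """
    now = time.time() if now is None else now
    recent = 0
    for line in latest_handshakes_output.splitlines():
        parts = line.split()
        if len(parts) != 2:
            continue  # skip blank or unexpected lines
        _public_key, timestamp = parts
        handshake = int(timestamp)
        # A timestamp of 0 means no handshake has completed with this peer.
        if handshake > 0 and now - handshake <= max_age_seconds:
            recent += 1
    return recent


if __name__ == "__main__":
    sample = "KEY_A\t1000\nKEY_B\t700\nKEY_C\t0\n"
    print(count_recent_peers(sample, now=1100))
```

The result is still only an estimate: an idle peer that has sent no traffic for a while looks the same as one that has gone away.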

- 'Nameless' peers

When viewing the output of Wireguard's configuration all peers are defined only by their public key. This is good for providing some level of anonymity, but if you were running a large organisation with a lot of Wireguard peers, it would be handy to have a nice-name field to indicate either a particular real-world person or perhaps the data-centre that is on the other end of the interface. This can be added into the config file as a comment, but it would be nice to see it added as an optional extra in the config.
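As a sketch of the comment workaround, the snippet below maps each peer's public key to a name taken from a comment line placed directly above its [Peer] section. That layout is purely a convention assumed for this example; Wireguard itself attaches no meaning to the comment, and the function name is made up.

```python
def peer_names_from_config(config_text):
    """Map each peer public key to the comment directly above its [Peer] section."""
    names = {}
    last_comment = None   # most recent comment not yet followed by another line
    pending_name = None   # name to attach to the peer currently being read
    in_peer = False
    for raw_line in config_text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        if line.startswith("#"):
            last_comment = line.lstrip("#").strip()
            continue
        if line.startswith("["):
            in_peer = line.lower() == "[peer]"
            pending_name = last_comment if in_peer else None
        elif in_peer:
            # Split on the first "=" only, as base64 keys can end in "=".
            key, _, value = line.partition("=")
            if key.strip().lower() == "publickey":
                names[value.strip()] = pending_name or "(unnamed)"
        # Any non-comment line uses up the comment seen before it.
        last_comment = None
    return names


if __name__ == "__main__":
    sample = """[Interface]
ListenPort = 51820

# alice-laptop
[Peer]
PublicKey = AAAA=
AllowedIPs = 10.0.0.2/32

[Peer]
PublicKey = BBBB=
AllowedIPs = 10.0.0.3/32
"""
    print(peer_names_from_config(sample))
```

The resulting dictionary could be used to label peers in the output of monitoring or reporting scripts.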

The stuff I'm too lazy to properly look into

Wireguard is touted as being very fast due to both its slim code and the way it's designed to operate.

My VPN servers generally don't have too many users so I can't make a direct useful comparison. The Client's network speed seemed neither faster nor slower than an OpenVPN connection. If I had done some in-depth checks I may have seen a reduction in CPU/RAM/Network use, but really, who has the time?

All in all I like Wireguard and plan on moving to it soon. Maybe in tandem with my existing VPN software until I have confidence that it is suitable.

I've written a puppet configuration to roll out once I'm ready. The only thing holding me back is waiting for my desktop OS to ship Network Manager with a Wireguard plugin so I can play nicely with my general network configuration.

There are a million articles online on how to get Wireguard up and running, and if you use VPNs I would suggest at least looking into it.

If you found this useful, please feel free to donate via bitcoin to 1NT2ErDzLDBPB8CDLk6j1qUdT6FmxkMmNz

PHP Design Pattern Code Implementations

Posted: 2019-03-15 22:06:15 by Alasdair Keyes

I was refreshing my memory on the Bridge pattern (https://en.wikipedia.org/wiki/Bridge_pattern) for some code I was writing and I came across this Github repo https://github.com/domnikl/DesignPatternsPHP with PHP implementations of many common design patterns.

It's well worth bookmarking for when you need to brush up on them, or even to use as a framework for implementing them.

Updating Skills

Posted: 2019-02-15 15:51:23 by Alasdair Keyes

Anyone who works in the modern corporate environment is well aware that the landscape changes quickly and that it's very easy to become complacent, resting on previous experience and not updating your skill-set for the future.

This is why I have undertaken the Montana Fish, Wildlife and Parks bear identification test to hone my ursine identification skills. With a quite staggering 86.7% accuracy, I am able to identify the difference between the types of bear found in Montana and surrounding states.

I have never been to Montana, I have no plans to visit Missoula or Great Falls. But I know that should I ever get caught in the North-Western wilderness of the United States I will be able to fall back on my above-par identification skills.

Computerphile

Posted: 2019-01-19 16:01:55 by Alasdair Keyes

I think I've linked to Computerphile videos in the past, but they definitely deserve their own post.

Computerphile is a Youtube channel created (I believe) by some staff/faculty at Nottingham University.

They release regular videos for both beginners and experts, explaining everything from how recently announced vulnerabilities work, through to low-level assembly and CPU operations. It's taught me a lot, e.g. how Face ID works and how double-ratchet message encryption is employed to keep tools like Whatsapp and Signal secure, to name just two.

If it's the kind of thing that tickles your fancy, it's quite a rabbit hole, so wait until you have a spare Sunday afternoon and get to it.

RedirectToken for Laravel

Posted: 2019-01-17 17:01:47 by Alasdair Keyes

A while back I wrote a library to easily allow creation of redirect URLs on the fly called redirecttoken https://gitlab.com/alasdairkeyes/redirecttoken/.

It was a cleaner implementation of a setup I used on this site to see what links visitors are clicking.

After I migrated my site from Slim to Laravel about 2 months ago, I created a Laravel Service Provider to easily port this functionality into my new site. This has also just been released at https://gitlab.com/alasdairkeyes/redirecttoken-laravel/.

Basic configuration is done through the .env file, so there's no need for any additional coding to add it to your Laravel install.

Both packages are on Packagist and can be installed using composer.

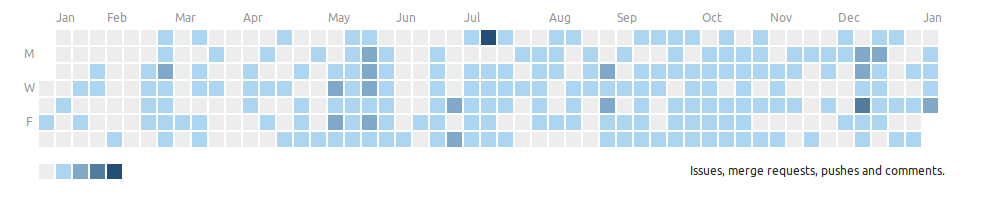

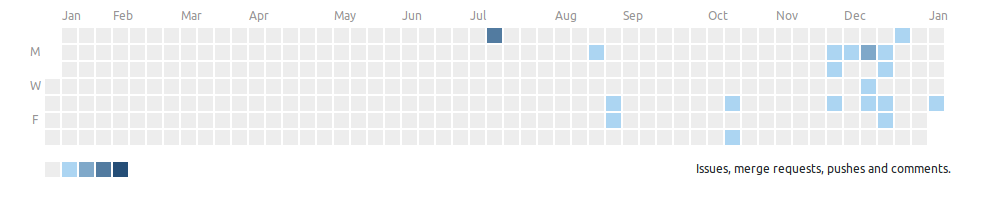

Gitlab Private vs. Public Heatmaps

Posted: 2019-01-10 15:51:18 by Alasdair Keyes

My Gitlab public vs private heatmaps. It looks rather pitiful but I promise, I have been coding!

** Private Heatmap **

** Public Heatmap **

I've got a few more open-source projects in the pipeline, so this should look a bit healthier soon.

Fun Linux Videos

Posted: 2018-11-25 23:31:45 by Alasdair Keyes

I'm not quite sure how (I think it was probably via Hacker News) but I came across the Youtube channel of Bryan Lunduke. https://www.youtube.com/user/BryanLunduke/

I don't really have any idea who he is, but he apparently gives a lot of talks at the LinuxFest Northwest conferences in the US. They're mainly a lighthearted and humorous look at Linux/Operating Systems and software in general. This includes what appears to be a decade-long series of talks entitled "Linux Sucks".

As this weekend was fairly slow I got through a few of his videos, some of which I've listed below. Well worth checking out.

- https://www.youtube.com/watch?v=TVHcdgrqbHE - Linux Sucks Forever

- https://www.youtube.com/watch?v=UjDQtNYxtbU - The complete history of Linux (abridged)

- https://www.youtube.com/watch?v=_e6BKJPnb5o - programmers_are_evil()

- https://www.youtube.com/watch?v=tabVaoeNtdk - They're watching you

- https://www.youtube.com/watch?v=xPbAXKMCDkY - Linux is freaking weird

The Future Is Bright

Posted: 2018-10-22 15:57:09 by Alasdair Keyes

Just what every right-minded developer has wanted. Perl in the browser!

Advertising and Malware block list

Posted: 2018-09-28 08:59:15 by Alasdair Keyes

Via a Hacker News article https://news.ycombinator.com/item?id=18075159 I came across a link to this git repo: https://github.com/StevenBlack/hosts

It's a curated list of known advertising and malware hostnames that can be added to your system hosts file to protect your system from shady sites accessed by your browser as part of included javascript/tracking pixels etc. As long as your browser still uses your system resolver instead of being set to DNS over HTTPS it should provide a good degree of security for your browsing.
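To illustrate how such a list works, here is a small sketch that reads hosts-file text and collects the hostnames pointed at a sink address. It assumes blocked entries use 0.0.0.0 as that address and that anything after a # is a comment; the function name and the example hostnames are made up.

```python
def blocked_hostnames(hosts_text, sink_address="0.0.0.0"):
    """Return the set of hostnames that a hosts file maps to the sink address."""
    blocked = set()
    for raw_line in hosts_text.splitlines():
        # Drop anything after a '#', then surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if not line:
            continue
        fields = line.split()
        address, names = fields[0], fields[1:]
        if address == sink_address:
            blocked.update(name.lower() for name in names)
    return blocked


if __name__ == "__main__":
    sample = (
        "127.0.0.1 localhost\n"
        "# advertising hosts\n"
        "0.0.0.0 ads.example.com\n"
        "0.0.0.0 tracker.example.net # inline note\n"
    )
    print(sorted(blocked_hostnames(sample)))
```

Because the system resolver consults the hosts file first, a lookup for one of these names resolves to the sink address rather than the real server, so the request goes nowhere.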

Mojolicious v8 release

Posted: 2018-09-19 11:14:22 by Alasdair Keyes

Perl 5 is often seen as a dated, if not dead, language, consigned to being hacked together by a sysadmin to keep some legacy platform from the 90s ticking over.

Sadly that part is true, but there is still life in the old dog yet, helped by the recent release of version 8 of the Mojolicious framework.

If you've not played with Perl but you have an interest, Mojolicious is a good entry point and I'd recommend it for any new projects. Its built-in support for web-sockets makes it very suitable for modern back-end platforms.

© Alasdair Keyes
